AI Has Reached Research Mathematics. Not as a Genius. As a Very Strange Junior Collaborator.

Gowers and Tao are saying the quiet part aloud: mathematicians are not disappearing, but the job description is changing.

For a long time, the safe thing to say about AI in mathematics was that it was useful for toy problems, olympiad tricks, and producing confident nonsense in LaTeX.

A pleasingly comfortable view.

Also increasingly obsolete.

Timothy Gowers, a Fields Medalist, recently wrote about an experiment with ChatGPT 5.5 Pro that forced him to revise his estimate of LLM mathematical ability sharply upward. His phrase was not “it helped a bit.” It was that the model produced “a piece of PhD-level research in an hour or so,” with no serious mathematical input from him. That is not the sort of sentence mathematicians write lightly, especially not British ones.

The setup was almost annoyingly simple.

Gowers took several open problems from a recent paper by Mel Nathanson in additive number theory and gave them to the model. No elaborate prompt engineering. No hidden human proof smuggled under the table. No grand ritual involving seventeen agents and a YAML file. Just the problems, the model, and a mathematician watching with the facial expression of someone realizing the machine is no longer doing party tricks.

The first problem concerned whether a known construction could be improved. ChatGPT thought for 17 minutes and 5 seconds and returned a construction giving a quadratic upper bound, which Gowers describes as “clearly best possible.” Then, when asked, it turned the argument into a LaTeX-style mathematical preprint in a little over two minutes.

This is already uncomfortable.

But the second case is the one that matters.

The model then worked on a more serious question related to a recent result by Isaac Rajagopal, an MIT student. Rajagopal had proved an exponential bound. Over a few iterations, ChatGPT first improved the bound, then pushed it all the way to a polynomial bound. Rajagopal later said the result looked “almost certainly correct,” not merely line by line, but at the level of ideas. More importantly, he said the model had found an original and clever idea, the sort he would have been proud to arrive at after a week or two of thinking. The model found and proved it in under an hour.

This is where the conversation changes.

Because this is not “the model remembered a theorem.”

It is not “the answer was already in the literature.”

It is not “AI searched Stack Exchange with better manners.”

It is a model making a new mathematical move inside a live research area, then producing something that expert humans judged very likely correct and conceptually meaningful. Gowers himself raises the obvious institutional question: if a result like this would be publishable had a human written it, what exactly should the mathematical community do with it? Journals? arXiv? A new moderated repository for AI-produced results? Human certification? Formal proof assistants? None of this has a neat answer yet.

And this is why the usual arguments are starting to sound antique.

“AI cannot do real mathematics.”

Well, apparently sometimes it can.

“AI will replace mathematicians.”

No, that is still the childish version.

The more interesting and more annoying truth is that AI is becoming useful at the exact layer where research begins to accelerate: checking ideas, trying variations, improving bounds, finding overlooked constructions, producing preprint-shaped drafts, searching adjacent arguments, and occasionally stumbling into a genuinely clever move before the human has finished making tea.

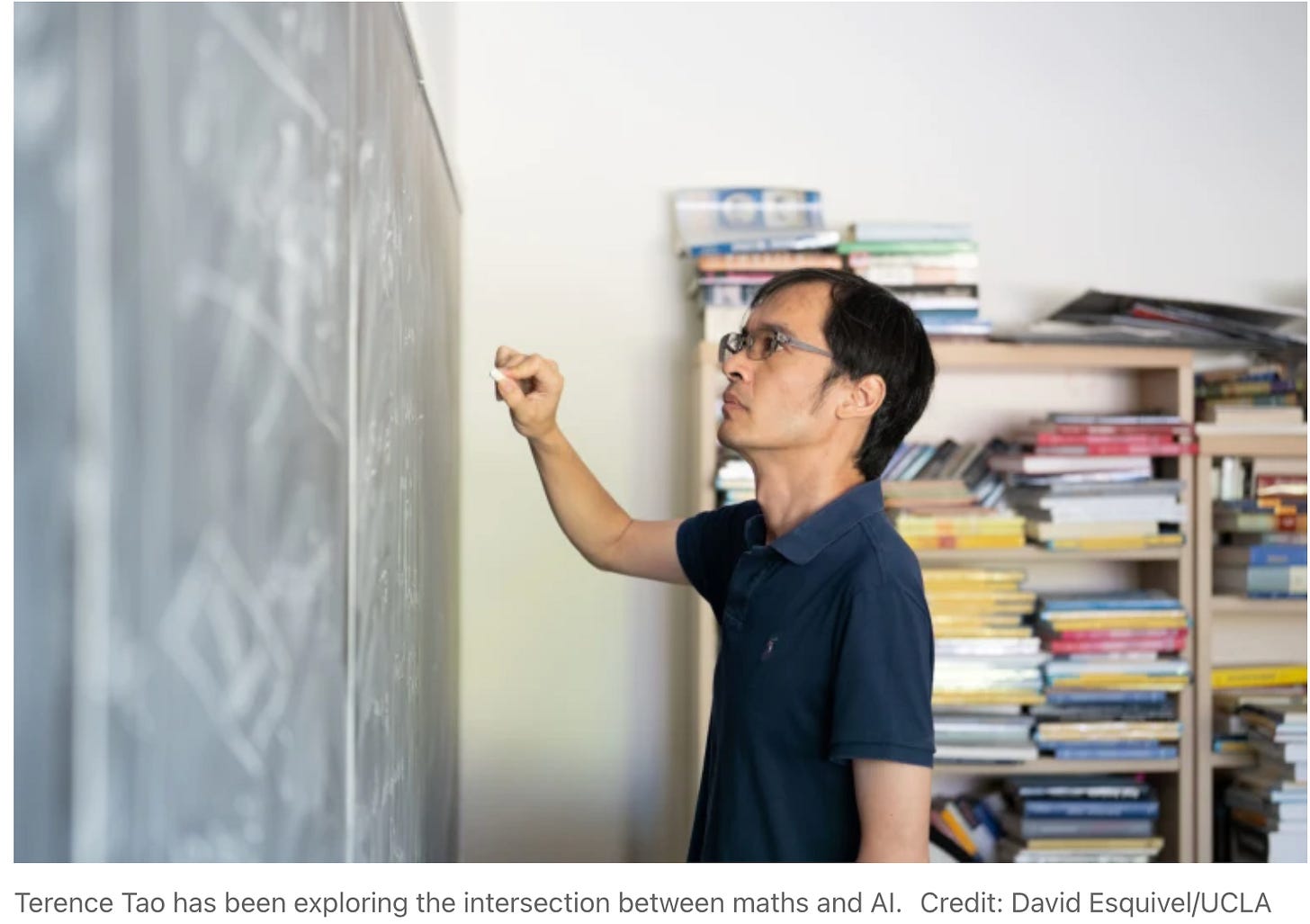

Nature recently interviewed Terence Tao on this exact shift. The headline is blunt: “The job description is changing.” Tao is not saying mathematicians are about to vanish into a GPU cluster. The Nature piece frames the current moment more carefully: many researchers still think the hype is overdone, but AI has moved in the past year from solving school-level problems to becoming useful in research mathematicians’ daily work.

That is the sane middle position.

Not apocalypse.

Not dismissal.

A change in the work.

The strong mathematician does not hand over thinking to the model and wait for truth to emerge like a croissant from an oven. That is how you get a beautiful hallucination with equation numbers.

The strong mathematician uses the model as an amplifier.

Try this construction.

Check this special case.

Find an analogy.

Rewrite this proof.

What happens if we replace the geometric progression?

Can this exponential bound become polynomial?

Where does the argument break?

Give me a route I have not tried.

This is not replacing the mathematician. It is changing the effective speed at which mathematical exploration happens.

And that, frankly, is much more disruptive than replacement.

Replacement is a simple story. We all know what to do with it: panic, deny, or write a TED talk.

Amplification is harder. It means the profession remains, but the baseline rises. The student who once needed several months to stumble into a research-level trick may now have a machine that can propose five routes before lunch. The expert who knows how to evaluate those routes becomes much more powerful. The beginner who blindly trusts the model becomes a decorative casualty.

This is the same pattern appearing everywhere else in AI.

In coding, the best engineer is not the one who lets the agent write random code. It is the one who knows what should be built and can tell when the model has produced a beautifully formatted grenade.

In medicine, the point is not to replace doctors with chatbots. It is to give doctors an extra diagnostic mind that does not get tired, then hold someone accountable for the final decision.

In mathematics, the point is not that AI becomes Euler in a box.

The point is that a human mathematician with AI may now explore the search space differently.

Faster.

Wider.

Sometimes stranger.

And occasionally, as Gowers’s post suggests, well enough to generate a piece of work at the level of a solid dissertation chapter.

That is the part people should take seriously.

Not because one story proves that AI has conquered mathematics. It does not. The subject is full of cliffs, traps, false proofs, hidden assumptions, and problems that remain hard precisely because every obvious route has already been mined, dynamited, and marked with warning signs.

But this story shows the floor has moved.

The old comfort was that LLMs could imitate mathematical language but not contribute mathematically.

That comfort is now looking thin.

The new question is not whether AI can ever help with real mathematics. It can.

The question is what mathematical work looks like when competent researchers have tireless assistants that can try arguments, generate drafts, search literature, suggest constructions, and occasionally find the move nobody bothered to test.

My guess is that the profession splits.

The top mathematicians become faster.

The careful mathematicians become more ambitious.

The lazy ones drown in hallucinations.

And the training pipeline gets very strange, because if every PhD student has access to something that can produce plausible research-level arguments, then we need to teach not only proof, but model supervision: how to interrogate a generated proof, how to detect slop, how to formalize, how to certify, how to decide whether a machine-produced idea is trivial, known, false, or quietly brilliant.

That may become one of the main skills.

Not doing mathematics instead of AI.

Doing mathematics with AI without becoming an idiot.

That is a less dramatic future than “the machines replace us.”

It is also more plausible, more useful, and more dangerous.

Because if Gowers is right, the bar has changed. It is no longer enough for a problem to be open. To count as a worthwhile research problem, it must also be hard enough that a strong model cannot solve it quickly.

A few years ago, that sentence would have sounded absurd.

Now it sounds like a research-management problem.