AI Will Not Flatten Your Org Chart. It Will Reveal Why You Had One in the First Place

AI will not just speed up the company. It will decide whether the company still deserves to exist in its current form.

For a brief, glorious moment, the business world convinced itself that AI transformation would be easy.

Buy everyone ChatGPT.

Add Copilot.

Run a pilot.

Make a slide with the word “agentic.”

Tell the board you are now an AI-first organization.

Wait for margins, speed, and competitive advantage to arrive like room service.

Instead, what arrived was a much more educational result.

A few employees became absurdly productive.

The rest of the company became slightly more chaotic.

And the CEO, staring at a dashboard full of licenses, pilots, and glowing anecdotes, discovered that none of this had yet added up to the kind of institutional advantage that justifies all the fuss.

This is not because the models are bad.

It is because most companies have committed a very old error in a very modern costume: they changed the motor, but not the factory.

That, in essence, is the core argument running through two of the more interesting recent pieces on AI transformation. One is the a16z essay, Institutional AI vs. Individual AI, which argues that the biggest gains today are happening at the level of individual workers rather than the firms they work for. The other is the Harvard Business Review piece The “Last Mile” Problem Slowing AI Transformation, which says, in the polite voice of people who have seen many expensive executive mistakes, that companies have spent billions on AI pilots and still failed to produce the productivity revolution they were promised.

Both are saying the same thing, really.

AI is not a feature rollout.

It is an organizational redesign problem.

And most firms are still treating it like software procurement.

The historical mistake is older than Silicon Valley

The a16z piece makes a comparison so useful it should be tattooed onto the conference room table of every company currently “exploring AI opportunities.”

In the 1890s, textile mills began replacing steam engines with electric motors. Sensible enough. Electricity was better. Cleaner. More flexible. More modern. And yet for decades, the productivity gains were strangely disappointing.

Why?

Because the factories were still built like steam factories.

They had simply attached a better power source to an old layout. It was only when factories were redesigned from the ground up in the 1920s — with new floor plans, distributed motors, new workflows, and new labor roles — that electrification finally produced its full effect.

This, according to a16z, is where we are now. We have electricity. We have not yet rebuilt the factory. And so AI often makes the old organization a bit faster, a bit noisier, and in some cases even worse, because it amplifies outputs faster than the system can absorb them.

Which is exactly what you would expect if the individual suddenly becomes superhuman while the process around them remains resolutely bureaucratic.

The myth of the productive individual

Modern enterprise AI is still mostly sold as a story about individual uplift.

The employee drafts faster.

The analyst summarizes faster.

The support rep replies faster.

The engineer ships faster.

The marketer ideates faster.

The lawyer reviews faster.

All true, sometimes spectacularly so.

But companies are not made of isolated bursts of brilliance. They are made of coordination. And coordination is where most of the gains currently go to die.

This is one of the sharpest points in the a16z essay: each employee is often sitting inside their own private AI bubble, armed with their own prompts, their own custom workflows, their own side databases, their own hacked-together tools, and their own peculiar understanding of what the machine is for. At the level of the person, this feels empowering. At the level of the firm, it looks like a thousand agents rowing in different directions while management calls the splashing “transformation.”

That is not leverage.

That is distributed improvisation.

And distributed improvisation, while charming in jazz, is not generally how you run a serious company.

AI does not solve fragmentation. It industrializes it

If each person in the company becomes 30% more productive in their own local way, but their outputs still do not line up, the company itself may not become more productive at all.

In fact, it can become less coherent.
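A toy calculation makes the point concrete. The sketch below assumes a three-stage pipeline whose weekly output is capped by its slowest stage; the stage names and capacities are invented for illustration and do not come from either essay.

```python
# Toy model: a work pipeline whose throughput is set by its slowest stage.
# Stage names and capacities are illustrative assumptions, not real data.

def throughput(capacity_per_week: dict[str, float]) -> float:
    """Items the whole pipeline can finish per week (the bottleneck stage)."""
    return min(capacity_per_week.values())

before = {"draft": 100.0, "review": 40.0, "approve": 50.0}

# Individual AI uplift: drafting gets 30% faster; review and approval do not.
after = dict(before, draft=before["draft"] * 1.3)

# Drafts that the rest of the pipeline cannot absorb pile up as inventory.
backlog_growth_before = before["draft"] - throughput(before)
backlog_growth_after = after["draft"] - throughput(after)

print("finished per week:", throughput(before), "->", throughput(after))
print("unabsorbed drafts per week:", backlog_growth_before, "->", backlog_growth_after)
```

Under these assumed numbers, finished work stays flat at the review bottleneck while the pile of unreviewed drafts grows by half. The local gain is real. The institutional gain is zero, and the queue is longer.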

One of the ugly little truths of this era is that AI can accelerate fragmentation much more quickly than it accelerates institutional alignment. People can now produce more analysis, more presentations, more mockups, more code, more recommendations, more reports, more internal proposals, and more polished nonsense than ever before. Which means that unless the company has a way to organize, filter, compare, and route that explosion of machine-amplified work, it simply gets buried alive in a larger pile of shinier sludge.

This is where the “signal versus noise” problem becomes central.

Because the real output of AI is not automatically value. Very often it is inventory. Text inventory. Slide inventory. Design inventory. Strategy inventory. Hypothesis inventory. Synthetic output with an excellent haircut.

And the executive challenge of the next decade may not be “how do we generate more?” but “how do we distinguish signal from AI-polished slop before the slop eats the entire decision system?”

That is not a theoretical concern. It is already happening in deal flow, content, corporate reporting, and internal planning. The machine makes more possible. It does not, by itself, make more important.

The Harvard diagnosis is even more brutal because it is less dramatic

The HBR article is useful because it strips away the startup theater and says, in crisp institutional language, that the revolution has stalled because of organizational design.

Not because the models are weak.

Not because the data is uniformly terrible.

Not because the vendors lied more than usual.

Because the company itself is not built to metabolize AI.

The article's diagnosis lands on seven recurring causes, a few of which are worth dwelling on. Each one is, frankly, a small act of violence against corporate self-esteem.

There is the multiplication of pilots without real scaling. One bank built more than 250 LLM apps. A fashion company automated 18,000 finance processes. And yet the gains remained local rather than systemic. This is the corporate equivalent of buying 250 gym memberships and still being unable to climb the stairs.

Then there is the productivity gap: widespread tool usage with no measurable business improvement. A company reports near-universal Copilot use, yet a department cannot show any metric that actually improved. This is because saved time does not automatically become value. It often becomes more email.

Then there is process debt, which is really technical debt’s uglier cousin. Companies layer AI on top of workflows that were already confused, redundant, over-approving, and designed for a pre-machine bureaucracy. So instead of redesigning the process, they just teach the machine to move through the same swamp faster.

That is not transformation. That is motorized swamp traversal.

Tribal knowledge is not a moat. It is a bottleneck wearing a crown

One of the HBR piece’s smartest points is its treatment of “tribal knowledge.”

Every large company has it. It lives in the heads of certain people who know how things really work. Not how the org chart says they work. Not how the documentation claims they work. How they actually work when something weird happens on a Tuesday and a client is angry and finance wants a number by 4 p.m.

Historically, this has made those people valuable.

Now it makes the organization fragile.

Because AI cannot operationalize what the company itself has refused to formalize. If the mission-critical knowledge remains trapped in people rather than encoded into systems, workflows, or models, then the organization is not transformable. It is merely staffed.

And here the politics become deliciously awkward, because the people who carry that knowledge are often the same people most threatened by translating it into machine-readable process. Quite understandably. If your authority came from being the human exception handler in a badly designed hierarchy, then a world in which that logic becomes encoded and shared is not your natural utopia.

The HBR solution is sensible: create roles that explicitly bridge business expertise and technical systems. Call them AI process architects, business technologists, or something equally depressing. Their job is to translate tacit expertise into operational design.

This matters because AI transformation is not mostly about models.

It is about making the company legible to itself.
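What "legible" might mean in practice can be sketched in a few lines. The example below is a hypothetical illustration, not something the HBR piece prescribes: it assumes one unwritten rule of thumb (the angry client, the 4 p.m. finance deadline) has been written down as data, with the rule names, case fields, and actions all invented for the purpose.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Rule:
    """One piece of formerly tacit know-how, written down as data."""
    name: str
    applies: Callable[[dict], bool]  # condition over a case record
    action: str                      # what the expert would have done
    source: str                      # whose expertise the rule encodes

# Hypothetical rules; field names and thresholds are assumptions.
RULES = [
    Rule(
        name="escalated_client_rush_number",
        applies=lambda case: case.get("client_escalated", False)
        and case.get("hours_to_finance_deadline", 99) <= 4,
        action="send provisional figure, flagged as unaudited",
        source="finance operations lead",
    ),
    Rule(
        name="routine_request",
        applies=lambda case: not case.get("client_escalated", False),
        action="follow the documented process",
        source="process handbook",
    ),
]

def decide(case: dict) -> tuple[str, str]:
    """Return (action, rule name); fall back to a human when no rule fits."""
    for rule in RULES:
        if rule.applies(case):
            return rule.action, rule.name
    return "escalate to a human", "no_matching_rule"

print(decide({"client_escalated": True, "hours_to_finance_deadline": 3}))
print(decide({"client_escalated": True, "hours_to_finance_deadline": 48}))
```

The second call falls through to a human, which is the useful part: every case no rule covers is a visible gap in what the company has formalized, rather than an invisible dependency on whoever happens to be at their desk.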

The biggest fantasy in AI is that “the prompt” is the interface of the future

The a16z piece makes a point that deserves to be repeated until people stop building entire operating models around chat boxes.

Prompting is not the end state.

Prompting AGI, if we ever get anywhere near AGI, is rather like attaching an electric motor to a steam loom and then congratulating yourself for being early. It may be useful for a while. It is not the structure of the future.

The most valuable work an institutional AI system will do is not work someone thought to ask for. It is work nobody thought to ask for at all.

It finds the risk nobody named.

The counterparty nobody noticed.

The sales opportunity nobody saw.

The operational drift nobody had time to inspect.

The pattern nobody believed was there because nobody had enough bandwidth to look.

This is the truly important distinction between individual AI and institutional AI.

Individual AI answers your question faster.

Institutional AI notices the question you failed to ask.

That is when it stops being a chatbot and starts becoming an operating system for the company.

Cost reduction is the kindergarten level

This leads naturally to the three levels of the AI-native company, which are best thought of not as maturity stages in a consulting diagram but as progressively more dangerous ambitions.

The first level is cost optimization.

This is where most firms start, because it is the easiest story to sell internally and the easiest one to model in a spreadsheet. Replace parts of coding, contract review, support, bookkeeping, design, marketing, and all the rest with agents or AI-assisted workflows. If enough office work is repetitive and if a substantial share of it can be decomposed into automatable pieces, the cost savings can become very real very quickly.

This is also the level where everyone feels clever and nobody is safe.

Because cost optimization is not a moat. It is table stakes. Once every competitor has access to the same models and the same baseline automations, the advantage vanishes. You do not win a market forever because you cut payroll first. You merely buy yourself time.

Which is why staying here is strategically dangerous.

The second level is where companies start behaving like they actually want to live

The second level is business scaling through new capability.

This is where AI stops merely shaving time and starts changing what markets you can enter, what products you can offer, and what forms of service become economically viable.

A localization agent does not just save translation budget. It lets a product expand into 15 markets with usable support and region-specific messaging.

A consulting firm does not just draft faster reports. It can now deliver implementation, monitoring, and continuous optimization rather than advice alone.

A software company does not just personalize emails. It starts hyper-personalizing the product itself in real time.

This is where AI begins to create upside rather than just reducing friction.

And if you are asking what the long-term protection is for a company stuck at level one, this is the answer: not much. The firms that learn to use AI to open new markets, compose new service layers, and build domain-specific intelligence will have a structural advantage over the ones still proudly announcing that invoicing is now 18% faster.

Faster invoicing is not destiny.

New capability is.

And then there is level three, where things stop being “AI adoption” and start becoming cybernetics

The third level is the one most people still find slightly insane.

Call it the cybernetic operating system, or Cybos if one is in a hurry. This is the stage where AI is no longer a collection of tools sprinkled around the firm. It becomes the coordination logic of the firm itself. The system continuously observes the organization, takes in feedback, updates its internal picture, routes work, and improves itself over time.

At that point AI is not merely helping management.

It is becoming a large part of what management is.

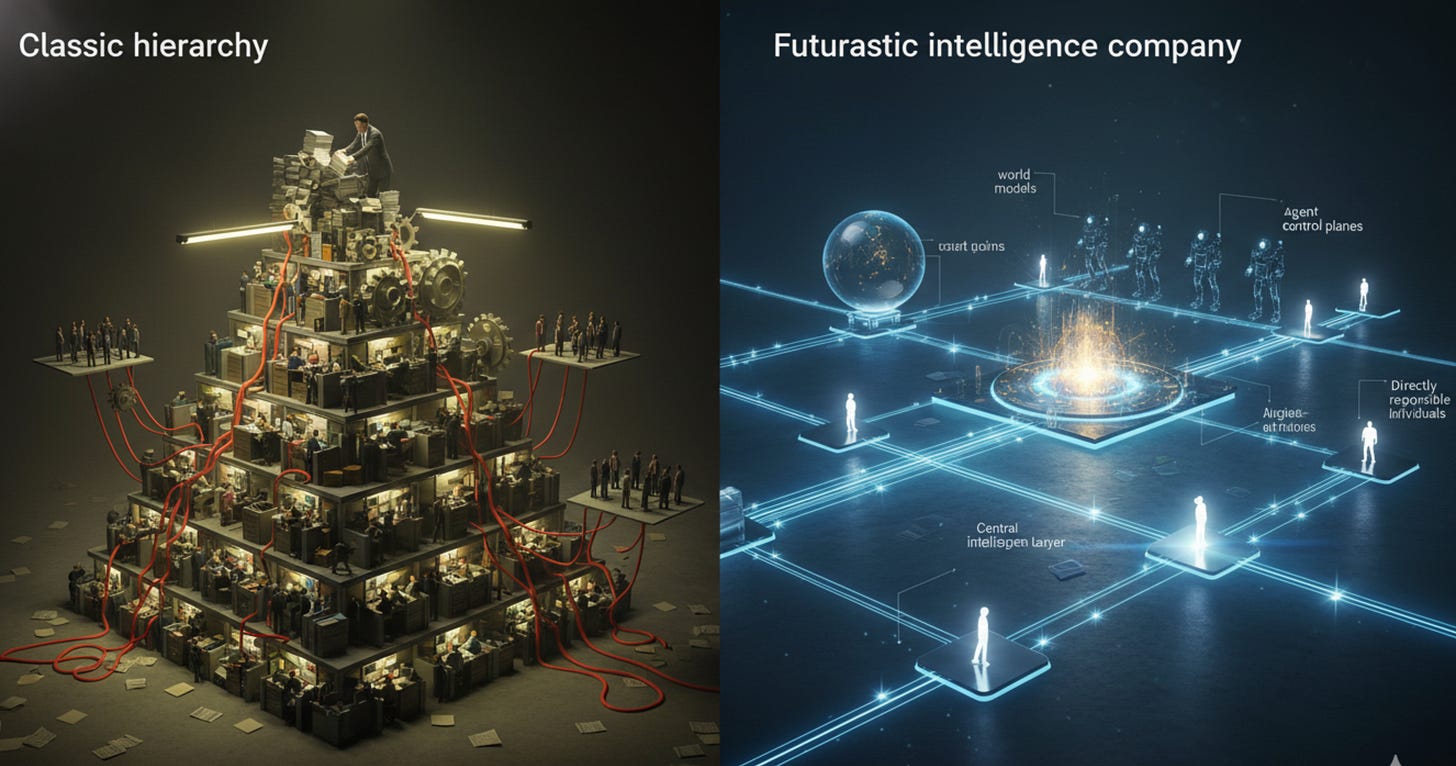

This is why Jack Dorsey’s recent essay from Block, From Hierarchy to Intelligence, is so interesting. It is not another “everyone gets a copilot” story. It is an attempt to rethink the company itself as an intelligence system rather than a hierarchy.

The essay is built around a historical argument that is, annoyingly, very good. For roughly two thousand years, from Roman military structures through Prussian general staff design, from railroads through modern corporations, large organizations have all been solving the same problem in slightly different clothes: how do you coordinate lots of people across limited communication bandwidth?

The answer, again and again, was hierarchy.

Small units.

Nested control.

Managers relaying information up and down the chain.

Line and staff functions.

Functional pyramids.

Matrix overlays.

Cross-functional patches.

Different eras, same core problem: information routing under human cognitive limits.

And Block’s claim is that AI may be the first real alternative to that arrangement.

The company as an intelligence, not a hierarchy

Dorsey’s argument is that most companies still assume humans must remain the primary routing layer for information. Managers coordinate. Middle management aggregates. Teams push status upward and instruction downward. The org chart exists because the organization needs a way to move knowledge, decisions, and priorities through the system.

But what if the system itself could maintain a continuously updated model of the company?

What if there were a company world model that tracked decisions, code, discussions, plans, blockers, progress, resource allocation, and performance as machine-readable artifacts?

What if there were also a customer world model, built from rich, honest transactional signals?

And what if an intelligence layer could compose capabilities into solutions without waiting for product managers and org charts to manually carry the problem around the building?

That is the radical part of the Block piece.

Not “AI makes employees faster.”

But: AI can replace what hierarchy does.

If that sounds grandiose, it should. It is grandiose. But it is also one of the few serious descriptions of what third-level transformation might actually look like in practice.

Not an AI feature set.

An intelligence architecture for the company itself.

Capabilities, world models, intelligence, interfaces

Block’s proposed redesign is useful precisely because it does not begin with apps.

It begins with four layers.

First, capabilities: the atomic financial primitives such as lending, payments, payroll, banking, card issuance, and so on. Not products. Building blocks.

Second, world models: one for the company and one for the customer. The company world model tracks operations and internal reality. The customer world model captures merchant and user behavior from dense financial data.

Third, an intelligence layer: the thing that composes capabilities into solutions at the right moment, based on those world models.

Fourth, interfaces: the surfaces through which those solutions are delivered. Cash App, Square, Afterpay, hardware, software, whatever else.

This is not a product roadmap. It is closer to a cybernetic stack.

And the really provocative part is that, in this design, the backlog is generated by reality itself. If the intelligence layer tries to solve a customer problem and cannot because the capability does not exist, that failure becomes the roadmap signal.

Which is a much harsher and more honest product strategy than most companies are used to.
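The four layers, and the idea of failure as a roadmap signal, can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not Block's actual architecture: the capability stubs, the customer fields, and the `infer_needs` heuristic are all hypothetical, and payroll is deliberately left out of the registry to show what a gap looks like.

```python
from dataclasses import dataclass, field
from typing import Callable

Customer = dict  # stand-in for one record in a customer world model

# Layer 1, capabilities: atomic building blocks (hypothetical stubs).
CAPABILITIES: dict[str, Callable[[Customer], str]] = {
    "payments": lambda c: f"payments enabled for {c['id']}",
    "lending": lambda c: f"loan offered to {c['id']}",
}

# Layer 2, world model: infer needs from observed signals (assumed fields).
def infer_needs(customer: Customer) -> list[str]:
    needs = []
    if customer.get("cash_flow_gap"):
        needs.append("lending")
    if customer.get("hires_staff"):
        needs.append("payroll")
    return needs

# Layer 3, intelligence: compose capabilities, record what is missing.
@dataclass
class IntelligenceLayer:
    capabilities: dict[str, Callable[[Customer], str]]
    roadmap_signals: list[str] = field(default_factory=list)

    def solve(self, customer: Customer) -> list[str]:
        delivered = []
        for need in infer_needs(customer):
            capability = self.capabilities.get(need)
            if capability is None:
                # The failure itself becomes the backlog entry.
                self.roadmap_signals.append(need)
            else:
                delivered.append(capability(customer))
        return delivered

# Layer 4, interface: the surface through which solutions reach the customer.
def render(messages: list[str]) -> str:
    return "\n".join(f"[app] {m}" for m in messages)

layer = IntelligenceLayer(CAPABILITIES)
merchant = {"id": "m1", "cash_flow_gap": True, "hires_staff": True}
print(render(layer.solve(merchant)))
print("roadmap signals:", layer.roadmap_signals)
```

In this toy run the merchant gets a loan offer, and the unmet payroll need lands in `roadmap_signals`. Nobody wrote a ticket. The system tried, failed, and logged the gap.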

What happens to people in this model?

This is where the piece becomes politically radioactive.

If intelligence moves into the system, then the human role changes. The organization normalizes around three roles: individual contributors who build the layers, directly responsible individuals who own cross-cutting outcomes, and player-coaches who combine craft with development of people.

What disappears, or at least shrinks dramatically, is the permanent layer of middle management whose historic role was largely information routing, alignment meetings, and priority negotiation.

That is why this argument matters far beyond Block.

Because if AI can increasingly carry the context that hierarchy used to carry, then a very large amount of modern management may turn out to have been a temporary communication technology.

Useful for two thousand years, yes. Permanent, no.

The real question every company now faces

This is the simplest and most brutal test of all:

What does your company understand that is genuinely hard to understand, and is that understanding getting deeper every day?

If the answer is “nothing,” then AI is just a cost story. You optimize. You cut. You get a few quarters of margin delight. Then someone with a better system absorbs you.

If the answer is “something deep, compounding, and structurally valuable,” then AI becomes much more than augmentation. It becomes the mechanism through which that understanding is turned into speed, product advantage, and organizational compounding.

This is why the phrase “AI transformation” is misleadingly small.

What is actually happening is a fight between two kinds of companies:

those treating AI as a productivity accessory for the existing structure, and those using AI as an excuse — finally — to redesign the structure itself.

The first group will save time.

The second group may become something different altogether.

We already have electricity. Rebuild the factory.

That is the conclusion, and it is not especially subtle.

The model is not the main bottleneck.

The data is not always the main bottleneck.

The real bottleneck, increasingly, is the organizational design wrapped around the technology.

How knowledge moves.

How decisions are made.

How capabilities are composed.

How agents are governed.

How signal is separated from sludge.

How process is redesigned rather than patched.

If you keep the old factory and add a clever motor, you get a louder old factory.

If you rebuild the factory, you may get a new kind of company.

And the firms that remain stuck at level one — cost optimization, local productivity, scattered copilots, ornamental pilots, and board decks full of adjectives — should probably ask themselves a very uncomfortable question:

what exactly is protecting them from competitors already building levels two and three?

Because history is not generally kind to factories that electrify early and redesign late.

And AI, if used seriously, is not here to make your org chart prettier.

It is here to make it negotiable.